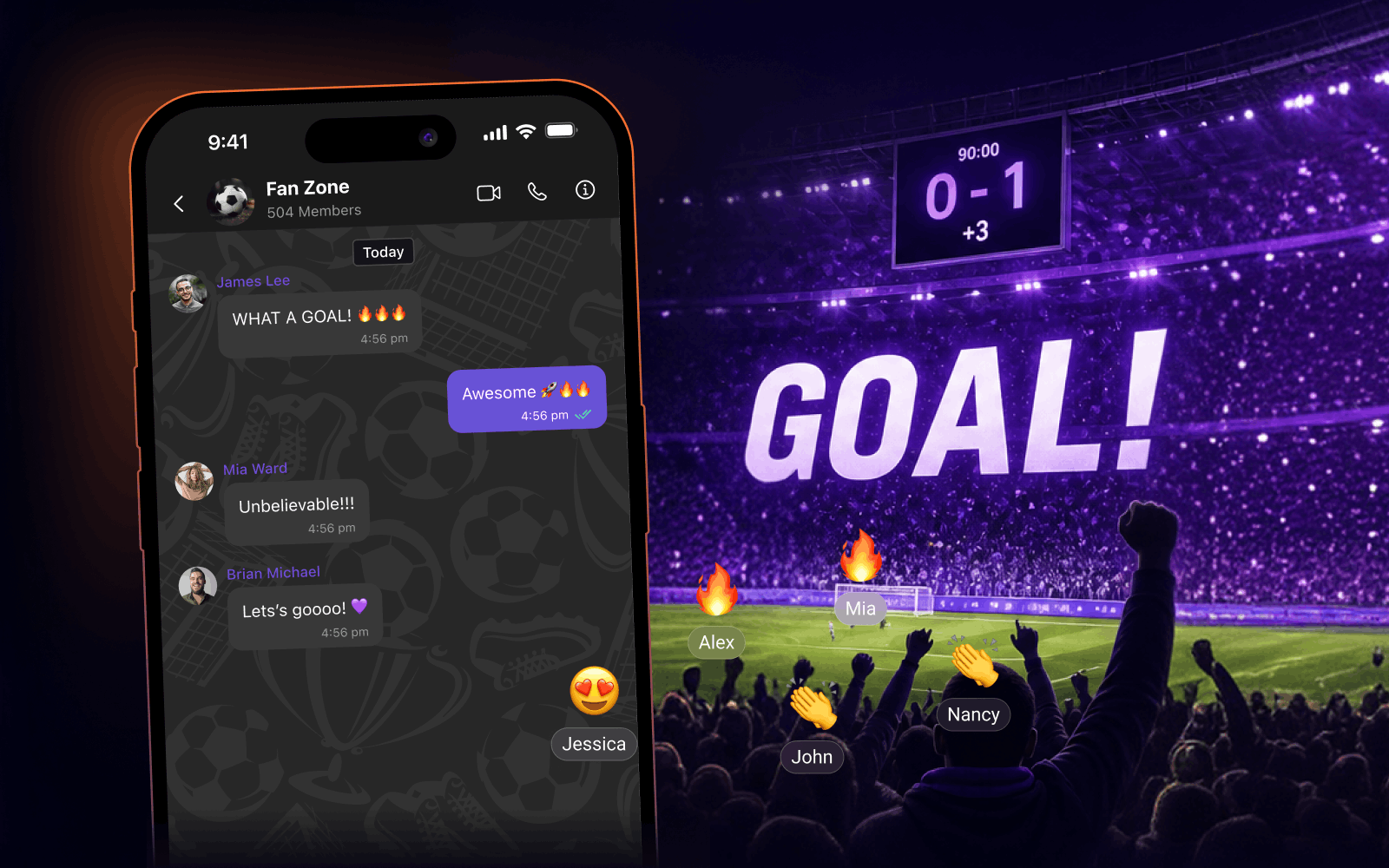

Let's set the scene. Ninety-third minute. Nil-nil. Someone finds the net from the edge of the box. The crowd - in the stadium and across every fan platform, sports app, and second-screen experience built on top of this match - reacts simultaneously.

Not sequentially. Not gradually. Simultaneously.

That moment is, technically speaking, a very specific kind of problem. One that chat infrastructure tends to have strong opinions about, usually at the worst possible time.

Chat has a way of surfacing its complexity at the exact moment you can least afford it.

The spike is the product

Most engineering conversations about chat scale treat load as something that grows linearly: more users, more messages, more connections. Provision accordingly. Monitor. Sleep well.

Sports is not that problem. Sports is a spike problem. The demand curve isn't a ramp; it's a heartbeat - long flat stretches punctuated by moments of near-vertical traffic. And those moments are exactly when your users are most engaged, most emotionally invested, and most likely to notice if something goes wrong.

A platform averaging 40,000 concurrent users during a match can see a 20x message burst in under two seconds following a goal. Presence state - who's online, who's typing, who just joined the channel - has to reconcile across tens of thousands of connections, in real time, without broadcasting stale data.

| 2s | 20x | 90k+ |
|---|---|---|
| Peak burst window after a goal | Message volume spike vs. baseline | Simultaneous reactions, one moment |

If your infrastructure was designed for average load, the goal celebration is where you find out.

What actually breaks and why

It's rarely one thing. It's a cascade. And it tends to follow a predictable sequence for teams who haven't built specifically for spiky, event-driven traffic.

Message delivery queues saturate. When everyone sends at once, backend queues fill faster than they drain. Messages get delayed. Then they arrive out of order. Then, in the worst case, they don't arrive at all or they arrive twice, because your retry logic kicked in while the original was still in flight.

Presence sync becomes expensive. Every join, leave, and status change has to propagate across connected clients. At baseline, that's manageable. During a goal celebration, you're not just handling message traffic; you're also handling thousands of concurrent presence state updates. The two workloads compete for the same resources.

WebSocket connections strain. Persistent connections are efficient under normal load. Under a burst, the sheer volume of active connections all sending at the same time can overwhelm connection management layers that weren't sized for synchronous activity at scale. The failure mode here is subtle: connections don't close, they just slow down. Latency creeps. Users notice before dashboards do.

The spike is the product. If your infrastructure treats it as an edge case, your users will notice before you do.

Designing for the burst, not the average

The engineers who've built well for this kind of traffic tend to share a few instincts. They're worth naming plainly.

Horizontal scaling that responds to traffic shape, not just volume. Autoscaling on raw message count isn't enough. You need to scale on connection rate, queue depth, and delivery latency, because those move faster than message count during a burst.
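To make that concrete, here is a minimal sketch of a scaling decision driven by those three signals rather than message count. Everything in it is an assumption for illustration: the `Signals` fields, the per-replica targets, and the `desired_replicas` helper are hypothetical, and real thresholds would come from load testing your own stack.

```python
import math
from dataclasses import dataclass


@dataclass
class Signals:
    connects_per_sec: float  # new connections per second, cluster-wide
    queue_depth: int         # messages waiting for delivery, cluster-wide
    p95_delivery_ms: float   # 95th percentile delivery latency


# Hypothetical targets; tune these from your own load tests.
TARGET_CONNECTS_PER_SEC = 500.0  # per replica
TARGET_QUEUE_DEPTH = 2_000       # per replica
TARGET_P95_MS = 150.0            # latency target, not per replica


def desired_replicas(current: int, s: Signals, max_replicas: int = 200) -> int:
    """Size the fleet by whichever signal is furthest over its target."""
    current = max(1, current)
    pressure = max(
        s.connects_per_sec / (current * TARGET_CONNECTS_PER_SEC),
        s.queue_depth / (current * TARGET_QUEUE_DEPTH),
        s.p95_delivery_ms / TARGET_P95_MS,
    )
    wanted = math.ceil(current * pressure)
    return max(1, min(max_replicas, wanted))
```

The sketch only answers "how many replicas right now?". A real autoscaler would also add a cooldown before scaling down, so the fleet doesn't shrink between two goals.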

Presence that degrades gracefully. Under load, you may not be able to propagate every individual presence update in real time. The right design chooses what to guarantee (message delivery) and what to approximate (presence freshness), rather than trying to do both perfectly and doing neither.
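One common way to approximate presence freshness is to coalesce updates: keep only the latest status per user and broadcast a batch on an interval instead of one event per change. The sketch below assumes that approach; `PresenceCoalescer` and its methods are hypothetical names, and the one-second interval is an arbitrary choice.

```python
from typing import Dict, Optional


class PresenceCoalescer:
    """Collapse rapid presence changes into periodic batches."""

    def __init__(self, flush_interval: float = 1.0):
        self.flush_interval = flush_interval  # seconds between broadcasts
        self._pending: Dict[str, str] = {}
        self._last_flush = 0.0

    def update(self, user_id: str, status: str) -> None:
        # Last write wins: intermediate states are deliberately dropped.
        self._pending[user_id] = status

    def maybe_flush(self, now: float) -> Optional[Dict[str, str]]:
        """Return a batch to broadcast, or None if it is too early or empty."""
        if now - self._last_flush < self.flush_interval or not self._pending:
            return None
        batch, self._pending = self._pending, {}
        self._last_flush = now
        return batch
```

The trade-off is explicit. A user who goes from "online" to "typing" within one interval produces a single update, so presence may be up to one interval stale, but the broadcast cost per interval is bounded by the number of distinct users who changed state rather than by the number of changes.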

Idempotent message delivery. At-least-once delivery is a reasonable guarantee under burst conditions. But it requires that your system and your application layer handle duplicate messages cleanly. If they don't, the failure mode looks like spam, not infrastructure.
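A minimal sketch of what "handling duplicates cleanly" can look like on the receiving side, assuming every message carries a unique ID that stays the same across retries. The `Deduplicator` class, the `deliver` helper, and the `"id"` field are illustrative names, not any particular SDK's API.

```python
from collections import OrderedDict


class Deduplicator:
    """Remember recently seen message IDs, bounded by capacity."""

    def __init__(self, capacity: int = 10_000):
        self.capacity = capacity
        self._seen = OrderedDict()  # insertion-ordered set of message IDs

    def first_time(self, message_id: str) -> bool:
        if message_id in self._seen:
            return False
        self._seen[message_id] = None
        if len(self._seen) > self.capacity:
            self._seen.popitem(last=False)  # evict the oldest ID
        return True


def deliver(messages, dedup, render):
    """Render each message at most once, even if it was delivered twice."""
    for msg in messages:
        if dedup.first_time(msg["id"]):
            render(msg)
```

The capacity bound matters: the window only needs to cover as long as a retry can plausibly be in flight, not the whole match.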

Client-side rate limiting and backpressure. The clients sending messages are part of the system. Implementing sensible send rate limits, even invisible ones, smooths the incoming spike before it reaches your backend. This is one of those things that feels unnecessary until it suddenly isn't.
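A token bucket is one standard way to implement an invisible send limit: short bursts go through, sustained flooding gets smoothed. The sketch below is an assumption about how a client might do it, not a description of any specific SDK; the `TokenBucket` name and the rate and burst values are hypothetical. Time is passed in explicitly so the behavior is easy to test; a real client would pass `time.monotonic()`.

```python
class TokenBucket:
    """Allow up to `burst` sends at once, refilling at `rate` per second."""

    def __init__(self, rate: float, burst: int, now: float = 0.0):
        self.rate = rate
        self.burst = burst
        self.tokens = float(burst)  # start full so the first burst is free
        self.updated = now

    def try_send(self, now: float) -> bool:
        # Refill based on elapsed time, capped at the burst size.
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False  # caller should queue or delay the message


bucket = TokenBucket(rate=2.0, burst=3)
results = [bucket.try_send(0.0) for _ in range(5)]
```

When `try_send` returns `False`, the client can hold the message briefly and retry rather than dropping it, which is what makes the limit invisible to the user.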

Fanout architecture that separates reads from writes. When 90,000 people react to the same event, you don't want 90,000 write operations followed by 90,000 read operations against the same data path. Separating the write path (message persistence) from the fanout path (delivery to subscribers) is what lets you handle both without either falling over.
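Here is a toy illustration of that separation using `asyncio`, under heavy simplifying assumptions: a Python list stands in for durable storage, in-memory lists stand in for subscriber connections, and the `Channel` class is hypothetical. The point is the shape: `publish` persists once and returns, while a separate worker handles delivery.

```python
import asyncio


class Channel:
    def __init__(self):
        self.log = []                        # stand-in for durable storage
        self.fanout_queue = asyncio.Queue()  # hand-off between the two paths
        self.subscribers = []                # each inbox is a plain list here

    async def publish(self, message: str) -> int:
        """Write path: persist once, enqueue for fanout, return."""
        self.log.append(message)
        seq = len(self.log) - 1
        await self.fanout_queue.put((seq, message))
        return seq

    async def fanout_worker(self) -> None:
        """Fanout path: deliver each persisted message to every subscriber."""
        while True:
            seq, message = await self.fanout_queue.get()
            for inbox in self.subscribers:
                inbox.append((seq, message))
            self.fanout_queue.task_done()


async def demo() -> Channel:
    channel = Channel()
    channel.subscribers = [[], [], []]
    worker = asyncio.create_task(channel.fanout_worker())
    await channel.publish("GOAL!")
    await channel.publish("what a strike")
    await channel.fanout_queue.join()  # wait until fanout has caught up
    worker.cancel()
    return channel
```

Because the two paths only share the queue, they can be scaled independently: more fanout workers during a burst, without touching how writes are persisted.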

The latency question is actually two questions

When someone says "latency" in the context of live sports chat, they usually mean: how quickly does a message I send reach someone else? That's the obvious one. But there's a second question that matters just as much: how quickly does the room feel like a room?

Presence, typing indicators, read receipts - these are the signals that make a chat feel alive. During a match, they're working harder than they ever do. Thousands of users are actively typing at the same moment. Hundreds of new users are joining channels following a goal. The room is moving.

If presence signals lag, if the user count appears frozen, if typing indicators stop updating, if read receipts backlog, the experience degrades even if message delivery stays fast. The room stops feeling like a room. That's a harder problem to solve than raw throughput, because it requires you to think about what users actually perceive, not just what your infrastructure is technically doing.

This is exactly why CometChat ships typing indicators, read receipts, and presence as first-class infrastructure, not UI polish layered on top of an API. At 7ms average response time across 35+ edge locations, those signals stay current even when the room is moving fast. Because a frozen presence counter during a live match isn't a minor annoyance; it's the moment the experience stops feeling live.

Why this matters beyond football

Sports is a useful lens for this problem because the spikes are predictable, well-understood, and extreme. But the underlying dynamics appear anywhere engagement is event-driven: live commerce, gaming, auctions, breaking news, product launches.

In all of these cases, the moment of highest load is also the moment of highest user attention. The traffic spike and the product moment are the same thing. That's what makes building for it correctly so important, and what makes the ‘we'll scale it when we need to’ instinct genuinely risky.

The traffic spike and the product moment are the same thing. Chat looks simple, right until it isn't.

What production-grade looks like

Most teams don't think about this until something breaks in production. That's not a criticism; it's how most engineering decisions get prioritized. The cost of building for scale you don't yet have is visible; the cost of not building for it is deferred until it becomes very visible, very quickly.

The infrastructure that handles 90,000 simultaneous reactions reliably is not that different from the infrastructure that handles 900. The difference is mostly in the decisions made early: queue architecture, delivery guarantees, presence design, connection management, edge distribution. None of it is exotic. It just has to be intentional.

CometChat's infrastructure is built around exactly these decisions: 50,000-member group support, unlimited messages on every plan, no per-message billing that penalizes you for a viral moment, and 99.999% uptime backed by ISO, HIPAA, SOC 2, and GDPR compliance. The architecture is designed for the burst, not the average, because that's the only architecture that holds when it actually matters.

If you're building for live events - be it sports, gaming, commerce, anything with a heartbeat - and you're thinking through how your chat layer needs to be structured, we've spent a lot of time in this space. Start free, or come talk to us. The hard parts are usually not where you expect them to be.

Shrinithi Vijayaraghavan

Creative Storytelling, CometChat